I took an ambiguous requirement—“optimize for mobile”—and translated it into measurable product improvements that increased conversion by 8%.

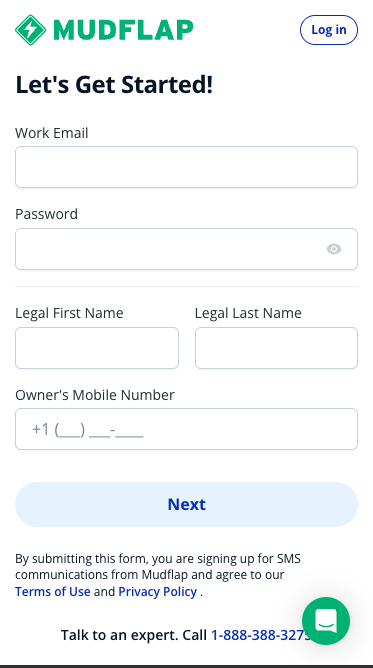

The initiative had two starting points: a PM flagged that a page in the dashboard looked off on mobile, and separately, an Engineering Director gave broader guidance to optimize the experience for mobile users. I used both signals to scope the problem and build alignment on what “optimizing for mobile” should actually mean.

I started by grounding the problem in usage data rather than assumptions. Using Amplitude analytics, I segmented our mobile traffic by device model and viewport width to understand real-world constraints. This allowed me to define a practical design target around the 375px logical width breakpoint, while excluding edge-case devices that each represented less than 1% of total usage to avoid over-optimizing for statistical noise.
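As a rough illustration of that filtering step, the sketch below drops viewport widths under a usage threshold and picks the narrowest width that remains. It is not the actual analysis: the `ViewportStat` shape, the function names, and the idea of taking the narrowest significant width as the design target are all assumptions made for the example.

```typescript
// Hypothetical shape for one row of exported viewport data.
interface ViewportStat {
  width: number;    // logical viewport width in CSS pixels
  sessions: number; // number of sessions observed at this width
}

// Keep only widths whose share of total sessions meets the threshold.
function significantViewports(
  stats: ViewportStat[],
  minShare: number = 0.01
): ViewportStat[] {
  const total = stats.reduce((sum, s) => sum + s.sessions, 0);
  if (total === 0) {
    return [];
  }
  return stats.filter((s) => s.sessions / total >= minShare);
}

// Assumption: the design target is the narrowest width that still
// carries a meaningful share of traffic.
function smallestSignificantWidth(
  stats: ViewportStat[],
  minShare: number = 0.01
): number | undefined {
  const widths = significantViewports(stats, minShare).map((s) => s.width);
  return widths.length > 0 ? Math.min(...widths) : undefined;
}
```

Filtering before choosing a target keeps a handful of very narrow devices from dictating the layout for everyone else.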

From there, I audited ~70 user-facing views across the application, systematically evaluating layout, responsiveness, and content hierarchy at the 375px viewport. I identified recurring failure patterns—text truncation, misaligned components, and degraded visual hierarchy—that directly impacted readability and user flow.

I partnered with design to align on high-impact fixes and independently implemented frontend changes where appropriate, particularly in cases where iterative UI adjustments could be made safely without design debt. This included improving spacing systems, adjusting responsive breakpoints, and simplifying key interaction surfaces to reduce cognitive load on smaller screens.

The audit also surfaced several core design system components that warranted deeper structural overhauls. We made a deliberate call to defer that work. Pursuing component-level redesigns at that point would have concentrated effort on isolated parts of the flow at the expense of overall progress; the risk was getting bogged down polishing one component while the broader experience remained broken. Instead, we focused on legibility and satisficing component behavior: ensuring components were functional, readable, and unobtrusive on mobile without blocking the initiative on achieving perfection. The deeper overhauls were logged and deferred to a later phase where they could be addressed properly in the design system rather than as one-off fixes.

In the following quarter, I returned to those components and delivered major redesigns across Tooltip, Toggletip (rebuilt for mobile interaction patterns), Table, Filters, Select/Dropdown, Badge, and Icons, improving responsiveness, adaptability, branding alignment, and accessibility across the board. Much of what I learned from that work fed directly into my personal design system library, Marq.

To validate impact, I worked with the product and data teams to structure an A/B test, isolating the mobile experience changes against a control group. We measured downstream conversion metrics and confirmed an 8% lift in conversion attributable to the improved mobile UX.
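For readers unfamiliar with how a figure like this is derived, a relative lift compares the treatment conversion rate to the control rate. The sketch below shows only that arithmetic; the `Variant` shape and function names are hypothetical, and it does not reproduce the actual experiment or its data.

```typescript
// Hypothetical summary of one arm of an A/B test.
interface Variant {
  visitors: number;
  conversions: number;
}

function conversionRate(variant: Variant): number {
  if (variant.visitors <= 0) {
    throw new Error("visitors must be positive");
  }
  return variant.conversions / variant.visitors;
}

// Relative lift: how much higher the treatment rate is than the
// control rate, expressed as a fraction of the control rate.
function relativeLift(control: Variant, treatment: Variant): number {
  const baseline = conversionRate(control);
  if (baseline === 0) {
    throw new Error("control conversion rate is zero; lift is undefined");
  }
  return (conversionRate(treatment) - baseline) / baseline;
}
```

A real analysis would also check statistical significance before attributing the lift to the change; this sketch covers the headline number only.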

Overall, I drove the initiative end-to-end—from data-informed problem framing, to UX diagnosis, to implementation and experimental validation—turning a vague optimization request into a measurable business outcome.

Chapter 2: Performance

With the layout and UX issues addressed, I turned to load performance—specifically, how fast the funnel could get usable content in front of a user on a mobile connection.

The first lever was page-level code splitting. The funnel serves two meaningfully different user populations: new users going through onboarding, and returning fleet users accessing the dashboard. These paths have different dependencies, different interaction patterns, and different performance budgets. Splitting at the page boundary meant each route only loaded the JavaScript it actually needed, keeping initial bundles lean and aligning resource loading with real use cases rather than shipping the entire application to every visitor.
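The idea can be sketched as a route table where each page has its own loader that only runs on first navigation. This is a simplified stand-in, not the production setup: the `PageModule` shape, the route paths, and the `lazyPage` and `navigate` helpers are assumptions, and in a bundler-based app each loader would typically be a dynamic `import()` so the bundler emits a separate chunk per page.

```typescript
// Hypothetical shape of a lazily loaded page module.
interface PageModule {
  render: () => string;
}

type PageLoader = () => Promise<PageModule>;

// Wrap a loader so the underlying chunk is requested at most once,
// and only when the route is first visited.
function lazyPage(loader: PageLoader): PageLoader {
  let pending: Promise<PageModule> | undefined;
  return () => {
    if (pending === undefined) {
      pending = loader();
    }
    return pending;
  };
}

// Counters stand in for network requests so the behavior is observable.
let onboardingLoads = 0;
let dashboardLoads = 0;

// In a real app these would be e.g. () => import("./pages/Onboarding")
// (path hypothetical); inline stand-ins keep the sketch self-contained.
const routes: Record<string, PageLoader> = {
  "/onboarding": lazyPage(() => {
    onboardingLoads += 1;
    return Promise.resolve({ render: () => "onboarding page" });
  }),
  "/dashboard": lazyPage(() => {
    dashboardLoads += 1;
    return Promise.resolve({ render: () => "dashboard page" });
  }),
};

function navigate(path: string): Promise<PageModule> {
  const load = routes[path];
  if (load === undefined) {
    return Promise.reject(new Error(`Unknown route: ${path}`));
  }
  return load();
}
```

A new user who only visits `/onboarding` never triggers the dashboard loader, which is the property the split is meant to guarantee.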

Alongside splitting, I introduced a caching strategy that separated long-lived assets from frequently changing ones. Core libraries—frameworks, utilities, anything with a stable version—were given long cache TTLs, so returning users and users navigating between pages paid no network cost for assets they’d already loaded. Application code, which changes with every deploy, was kept in a separate chunk with a shorter cache lifetime. This separation meant cache invalidation was scoped and intentional rather than a blunt instrument.
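One way to express that separation is a small function that chooses a `Cache-Control` header per asset. The sketch below is illustrative only: the `vendor.` file-name prefix, the example paths, and both TTL values are assumptions, not the values used in the project. The long-lived policy also assumes vendor file names include a content hash, so a new library version gets a new URL.

```typescript
// Hypothetical TTLs; the real values would depend on deploy cadence.
const VENDOR_TTL_SECONDS = 365 * 24 * 60 * 60; // one year
const APP_TTL_SECONDS = 5 * 60;                // five minutes

// Choose a Cache-Control header based on which chunk an asset belongs to.
// Assumption: stable third-party code is emitted as "vendor.<hash>.js".
function cacheControlFor(assetPath: string): string {
  const fileName = assetPath.split("/").pop() ?? "";
  if (fileName.startsWith("vendor.")) {
    // Content-hashed and rarely changing: safe to cache aggressively.
    return `public, max-age=${VENDOR_TTL_SECONDS}, immutable`;
  }
  // Application code changes with every deploy: keep the lifetime short.
  return `public, max-age=${APP_TTL_SECONDS}`;
}
```

With this split, a deploy that only touches application code leaves the vendor chunk's cached copy valid for returning users.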

The second lever was lazy loading for bundle-heavy tracking and analytics code. Third-party tracking scripts were among the largest contributors to initial bundle weight, and they have no bearing on what a user sees or interacts with when the page first loads. Deferring them until after the critical path directly improved Largest Contentful Paint (LCP), because the browser had less JavaScript to parse and execute before it could render meaningful content.
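A minimal sketch of the deferral pattern, assuming a small queue that holds non-critical work until the page signals that the critical path is done. The `DeferredTasks` class is hypothetical and deliberately framework-free; in a browser, `release()` might be called from a `load` event listener or an idle callback, and each task might trigger a dynamic import of the tracking code.

```typescript
type Task = () => void;

// Holds non-critical work (such as initializing tracking) until released.
class DeferredTasks {
  private queue: Task[] = [];
  private released = false;

  // Queue a task, or run it immediately if the critical path is already done.
  add(task: Task): void {
    if (this.released) {
      task();
      return;
    }
    this.queue.push(task);
  }

  // Run everything queued so far, in insertion order. Safe to call twice.
  release(): void {
    if (this.released) {
      return;
    }
    this.released = true;
    const tasks = this.queue;
    this.queue = [];
    tasks.forEach((task) => task());
  }
}
```

Anything added after release runs immediately, so late callers do not need to know whether the page has finished loading.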

I instrumented and measured the impact using AWS CloudWatch RUM rather than running a formal A/B test. The key reason for choosing RUM was to capture real-world usage data from customers in the field: not synthetic benchmarks or lab conditions, but actual LCP and load performance as experienced by real users on real devices and connections.

Selecting the right tool required its own analysis. I evaluated Datadog, Amplitude, and PostHog alongside CloudWatch. Datadog offered the most comprehensive observability suite but came with significant cost overhead that wasn’t justified for initial performance measurement. Amplitude and PostHog are well suited to product analytics and event tracking but weren’t the right fit for page-load performance metrics such as LCP. CloudWatch won on pragmatic grounds: we were already on AWS, IAM provisioning was trivial to wire up through existing Terraform infrastructure, and the cost was negligible. The right tool isn’t always the most powerful one; it’s the one that fits the context.

Skipping the A/B test was a deliberate tradeoff. Code splitting and lazy loading are low-risk, well-understood techniques with no realistic downside path: there is no version of “we split the bundle and it hurt users.” The engineering cost of the changes was low and the directional outcome was predictable. Structuring an A/B test would have added coordination overhead, delayed the work, and consumed data team capacity, all to confirm something that didn’t need a controlled experiment to validate. LCP metrics before and after were sufficient evidence. A/B testing is the right tool when you’re validating uncertain product decisions against user behavior, not when you’re removing unnecessary weight from a page load.
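A before-and-after comparison of field LCP data can be summarized with a percentile, as in the sketch below. The choice of the 75th percentile follows the common Core Web Vitals convention and is an assumption here, as are the function names; the sketch does not call CloudWatch RUM and expects LCP samples in milliseconds that have already been exported.

```typescript
// Nearest-rank percentile over a list of samples (e.g. LCP in milliseconds).
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) {
    throw new Error("percentile requires at least one sample");
  }
  const sorted = samples.slice().sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length);
  const index = Math.min(sorted.length - 1, Math.max(0, rank - 1));
  const value = sorted[index];
  if (value === undefined) {
    throw new Error("percentile index out of range");
  }
  return value;
}

// Positive result means the p75 LCP got faster after the change.
function p75Improvement(before: number[], after: number[]): number {
  return percentile(before, 75) - percentile(after, 75);
}
```

Comparing a percentile rather than a mean keeps a few very slow sessions from dominating the result.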